
How to build accurate agentic analytics systems — why enterprise context is king

Historically, extracting knowledge from data required technical specialists to hand-code custom SQL queries for each type of question they wanted to answer. The 1990s brought business intelligence (BI) dashboards that made those answers more accessible, but this new workflow still required an analyst to know the right questions to ask and build the necessary queries and views.

Self-service BI tools like Tableau, Qlik, and Microsoft Power BI began emerging in the early 2000s, shifting business intelligence strategy toward analyst-driven exploration without the need for significant IT support. The arrival of large language models (LLMs) and generative AI in the early 2020s made AI-assisted conversational analytics (“AI copilots”) possible.

Today, agentic analytics is enabling the next step in this evolution: a shift from AI-assisted to more autonomous systems that can explore and reason over data, monitor your business continuously, surface insights proactively, and even fix errors automatically. Where data analysis was previously descriptive (telling you what had happened) or predictive (projecting what was likely to happen), today’s agentic analytics systems are proactive and action-oriented (sensing issues and autonomously repairing them).

In this article, you’ll learn what agentic analytics is, how it works, its central challenges, and its key capabilities, closing with a look at the architectural building blocks that make it all possible.

What is agentic analytics?

Agentic analytics is a goal-oriented, AI-driven business intelligence system composed of autonomous AI agents that can reason over your data, monitor your business continuously, and surface insights before you even need to ask for them.

In its 2026 Agentic Analytics Market Guide, Gartner emphasizes that agentic analytics works “across the data-to-insight workflow, orchestrating tasks either semiautonomously or autonomously toward stated goals that support, augment or automate insights.”

Across industry definitions, you’ll notice that “goal” is a key word. That’s because an agentic analytics system is organized around goals rather than queries. A user or business process defines an objective, and the system works toward it. This goal orientation is the key feature separating agentic analytics from conversational BI, which you can get from a general-purpose LLM or an AI copilot.

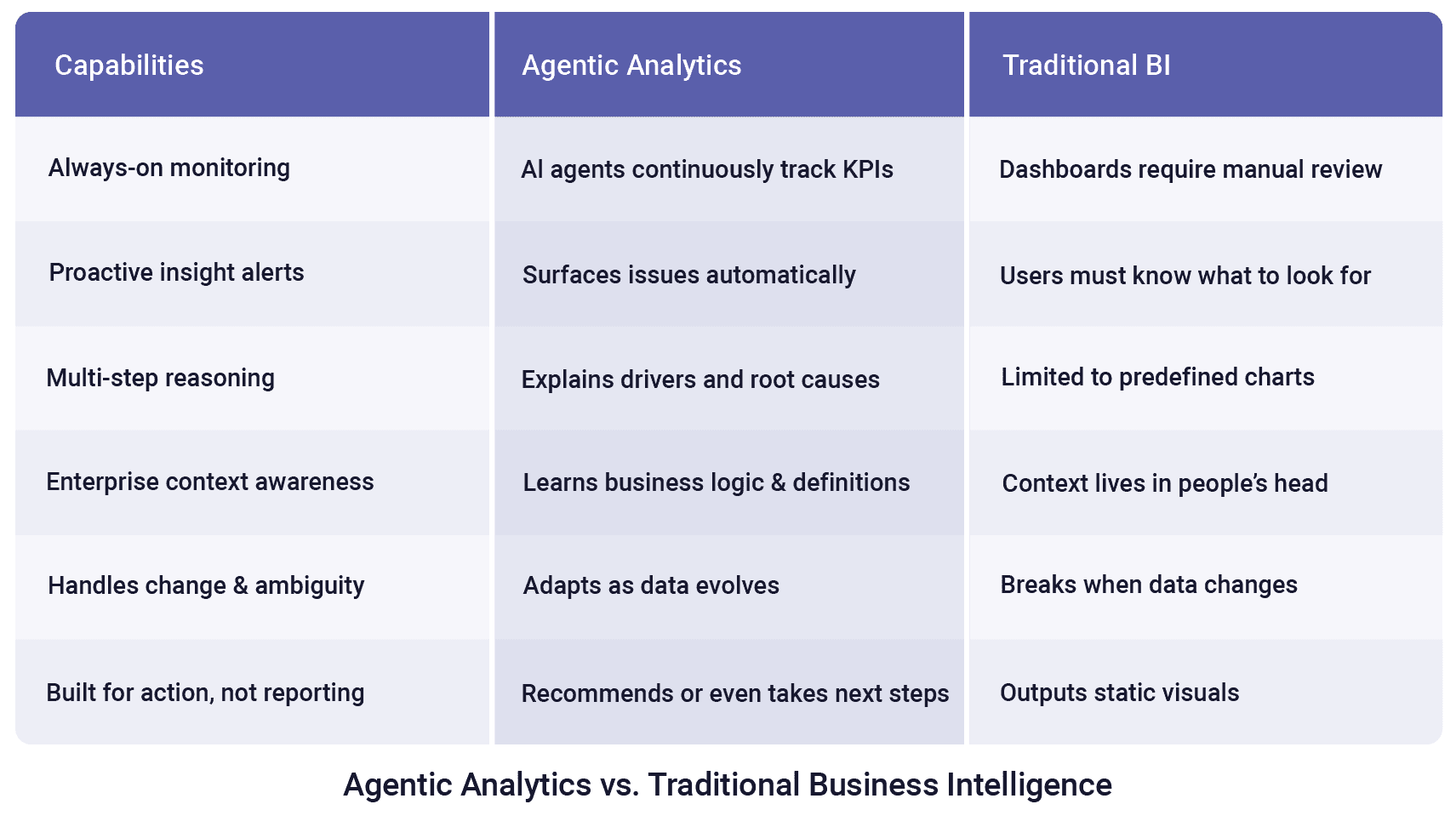

Agentic analytics systems continuously monitor your KPIs, automatically surface issues as they emerge, and explain the drivers and root causes behind them. Unlike traditional BI tools that are limited to predefined charts and static visuals, they learn your business logic and definitions, adapt as your data evolves, and go beyond reporting to recommend (or even take) next steps.

Overview of the differences between agentic analytics and traditional business intelligence

The role of AI agents in agentic analytics

AI agents are autonomous AI systems that can take actions to achieve goals. Today, when we talk about AI agents, we mean systems based on LLMs that have been fine-tuned and/or prompted to use tools. This allows these systems to do things that go beyond simply generating text.

For instance, early LLMs were not good at counting or math. A familiar example was asking an LLM, “How many ‘r’s are there in ‘strawberry’?” LLMs can be surprisingly bad at these questions, partly because they process text as multi-character tokens rather than as individual letters. However, LLMs are great at generating not only human languages but also computer languages. The latest LLMs can reason step by step, spelling a word out letter by letter, or they can use external tools. For example, the LLM might automatically write Python code to count the letters. If the LLM has access to a Python interpreter to actually run that code, it is considered agentic.
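As an illustration, the snippet below is the kind of code an LLM might write for the strawberry question. The function name `count_letter` is hypothetical, not the output of any particular model; the point is that executing code yields an exact count instead of a guess.

```python
def count_letter(word: str, letter: str) -> int:
    """Count case-insensitive occurrences of a single letter in a word."""
    return word.lower().count(letter.lower())


# Running code, rather than "reading" the word as tokens, gives an exact answer.
print(count_letter("strawberry", "r"))  # prints 3
```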

Similarly, when an LLM searches the web, uses a calculator tool to solve an arithmetic problem, queries a database, or generates a structured call to a REST API, that is also agentic AI.

In a data analytics context, agentic AI might look like an LLM generating a SQL query and querying your data to get the answer to a question like this: “Which of our sales regions grew the most last quarter?” After identifying that your Northeast region grew by 12%, the agent can benchmark this performance against the industry average by using a web search tool. The agent might access a CRM tool to identify low-performing sales representatives with high lead volume but low conversion rates, and recommend that they attend a “Closing Skills” workshop. Tools give AI agents access to capabilities and resources that general-purpose LLMs don’t inherently have.
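A minimal sketch of that first step, assuming a hypothetical `sales` table with one revenue row per region and quarter. The table, its columns, and the sample figures are invented for illustration (chosen so the Northeast shows the 12% growth from the example); a real agent would take the schema from your data context. Python's built-in `sqlite3` module stands in for your warehouse.

```python
import sqlite3

# Hypothetical schema and sample rows, invented purely for illustration.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE sales (region TEXT, quarter TEXT, revenue REAL)")
conn.executemany(
    "INSERT INTO sales VALUES (?, ?, ?)",
    [
        ("Northeast", "2024-Q2", 1000.0), ("Northeast", "2024-Q3", 1120.0),
        ("Southeast", "2024-Q2", 900.0), ("Southeast", "2024-Q3", 945.0),
        ("West", "2024-Q2", 1200.0), ("West", "2024-Q3", 1176.0),
    ],
)

# The kind of query an agent might generate: quarter-over-quarter growth by region.
GROWTH_SQL = """
SELECT cur.region,
       ROUND(100.0 * (cur.revenue - prev.revenue) / prev.revenue, 1) AS growth_pct
FROM sales AS cur
JOIN sales AS prev ON prev.region = cur.region
WHERE cur.quarter = :current AND prev.quarter = :previous
ORDER BY growth_pct DESC
"""

rows = conn.execute(GROWTH_SQL, {"current": "2024-Q3", "previous": "2024-Q2"}).fetchall()
for region, growth in rows:
    print(f"{region}: {growth:+.1f}%")
```

The agent would then pass the top row to its next tool call (for example, a web search for an industry benchmark) rather than stopping at the query result.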

Multi-step agentic AI reasoning loop

Whenever LLMs use tools, we call them agentic. However, the true power of agentic AI is its ability to reason across multiple tools and steps in order to achieve a goal. These LLMs are fine-tuned for reasoning tasks and are given a list of available tools, along with descriptions of what each tool does and how to use it. The AI agent uses those descriptions to reason about when to call which tool and how to interpret its outputs.

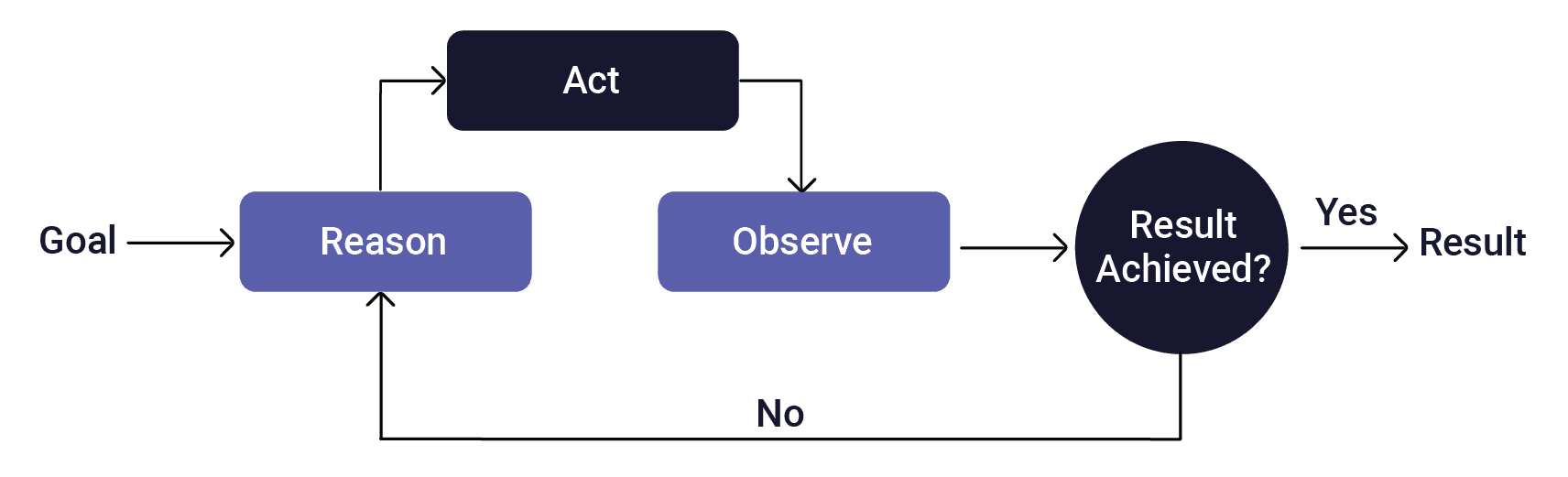

These agents are deploying a Reason → Act → Observe → Iterate loop, as shown below.

Agentic AI’s Reason → Act → Observe → Iterate loop

In the Reason step, the agent considers what its next step must be to move toward its goal, reviews the tools available to it, and makes a plan to use one of them. In the Act step, the agent calls that tool with the appropriate inputs. In the Observe step, the model reflects on the tool’s output and checks whether it now has the final result it needs. If so, it assembles and outputs its response. If not, it iterates, going back to the Reason step to figure out what its next step should be.
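The loop can be sketched in a few lines of Python. This is a minimal illustration, not how any particular agent framework is implemented: the `reason` callable stands in for the LLM, and the scripted reasoner and `count_letters` tool are hypothetical, reusing the strawberry example so the sketch runs without a model.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Optional, Tuple

Observation = Tuple[str, str]  # (tool name, tool output)


@dataclass
class Step:
    thought: str
    tool: Optional[str] = None  # None means the agent is ready to answer
    tool_input: str = ""
    answer: str = ""


@dataclass
class Agent:
    tools: Dict[str, Callable[[str], str]]
    reason: Callable[[str, List[Observation]], Step]  # stand-in for the LLM
    max_steps: int = 5
    trace: List[str] = field(default_factory=list)  # log of every step, for auditing

    def run(self, goal: str) -> str:
        observations: List[Observation] = []
        for _ in range(self.max_steps):
            step = self.reason(goal, observations)  # Reason
            self.trace.append(f"REASON: {step.thought}")
            if step.tool is None:  # the agent has what it needs
                return step.answer
            output = self.tools[step.tool](step.tool_input)  # Act
            self.trace.append(f"ACT: {step.tool}({step.tool_input!r}) -> {output!r}")
            observations.append((step.tool, output))  # Observe, then iterate
        return "Stopped: step budget exhausted without a final answer."


def count_letters(arg: str) -> str:
    """Hypothetical tool: 'word,letter' -> number of occurrences, as text."""
    word, letter = arg.split(",")
    return str(word.count(letter))


def scripted_reasoner(goal: str, observations: List[Observation]) -> Step:
    """Hard-coded stand-in for an LLM's reasoning on the strawberry goal."""
    if not observations:
        return Step("Counting letters myself is unreliable; use the tool.",
                    tool="count_letters", tool_input="strawberry,r")
    _, result = observations[-1]
    return Step("The tool output answers the goal.",
                answer=f"'strawberry' contains {result} r's.")


agent = Agent(tools={"count_letters": count_letters}, reason=scripted_reasoner)
answer = agent.run("How many r's are in 'strawberry'?")
print(answer)  # 'strawberry' contains 3 r's.
```

The `max_steps` budget and the `trace` log reflect two practical concerns: an agent should not loop forever, and every step should be recorded so it can be reviewed later.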

While the major LLM chatbots today (like Claude, Gemini, and ChatGPT) do have some agentic capabilities, such as web search and code execution, these are general-purpose tools. AI copilots for analytics are more purpose-built and also have some basic agentic capabilities (most notably, the ability to generate and execute SQL queries).

True agentic analytics, on the other hand, goes beyond just answering your queries, providing proactive insight generation through goal-oriented continuous monitoring. Most importantly, agentic analytics systems can be grounded in persistent, evolving context about your business definitions, data relationships, and organizational logic. This helps ensure accuracy at enterprise scale.

What is AI Context?

General-purpose LLMs today are great at reasoning over data. So why do you need a special agentic AI system for analytics? In a word: context.

AI Context is the background information, user history, instructions, and data provided to a model so that it can generate relevant, accurate, and coherent responses. It functions as the model’s working memory.

Every enterprise data environment is a web of ambiguity. There are subtleties in your business data, definitions, and team-specific use cases that your analytic AI needs to understand in order to deliver an accurate response.

For agentic analytics, AI context falls into the following categories:

Data Context

The structural knowledge that tells the LLM what tables exist, how they join, and what the columns mean. It can include which data sources are reliable and what edge cases cause anomalies.

Business Context

The semantic knowledge that captures how the organization defines its metrics, including how definitions have changed over time and how they differ between departments and teams. It can direct the AI to trusted data sources based on the question being asked.

Historical Context

The institutional memory of which questions have been asked before. Drawing on it, the AI can be shown examples of anomalies that were investigated, their outcomes, and the basis for the decisions made. It also captures mistakes the AI should not repeat.

Presentational Context

The communication judgment that lets the LLM tailor its answer to the user who asks the question. A user might not be authorized to access certain data, or they may prefer numbers over narrative. Insights can also be framed to support decisions, for example, by always providing a benchmark against which a trend can be compared.

Why does AI context matter?

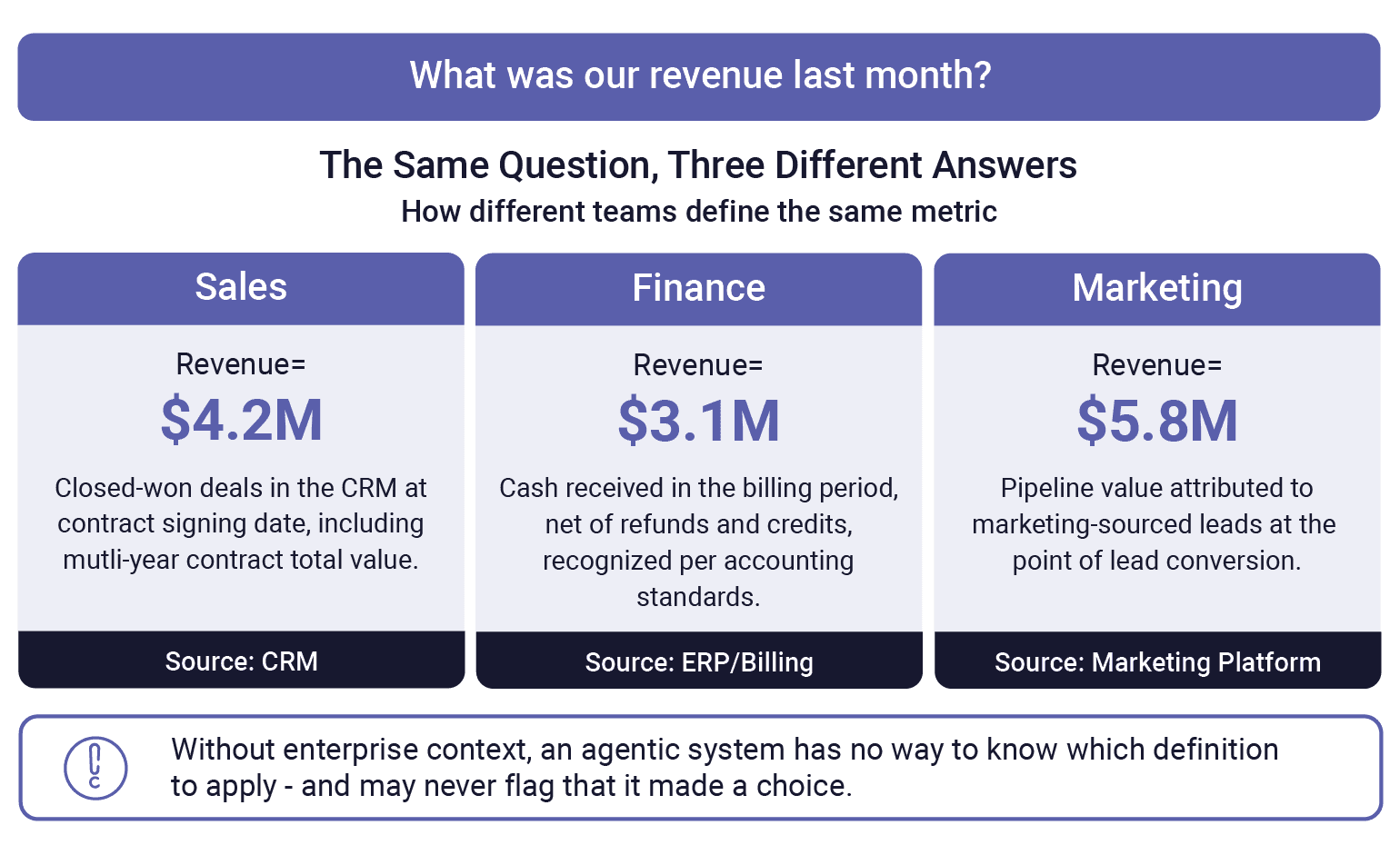

There may be ambiguities in how different teams use terms like “revenue,” “pipeline,” or “active customer.” And there are likely naming inconsistencies across systems (e.g., “customer” vs. “client” vs. “user”). An agent without this context may silently choose the wrong interpretation of an ambiguous term and have difficulty creating the appropriate joins across differently named fields.

A simple term like “revenue” may be interpreted differently by different business units
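One way an agent can avoid silently picking the wrong interpretation is to look ambiguous terms up in a governed context store and refuse to guess when a definition depends on who is asking. The sketch below assumes hypothetical dictionaries (`SYNONYMS`, `METRIC_DEFINITIONS`) and invented SQL fragments; in practice these entries would come from your context layer rather than being hard-coded.

```python
from typing import Optional

# Hypothetical business context: canonical names for differently named entities...
SYNONYMS = {"customer": "customer", "client": "customer", "user": "customer"}

# ...and team-specific definitions of the same metric (illustrative SQL fragments).
METRIC_DEFINITIONS = {
    "revenue": {
        "finance": "SUM(invoice_amount) WHERE status = 'recognized'",
        "sales": "SUM(contract_value) WHERE stage = 'closed_won'",
    },
}


class AmbiguousTermError(ValueError):
    """Raised when a term has several definitions and no team context picks one."""


def canonical_entity(name: str) -> str:
    """Map 'client', 'user', etc. to the canonical entity used for joins."""
    return SYNONYMS.get(name.lower(), name.lower())


def resolve_metric(term: str, team: Optional[str] = None) -> str:
    definitions = METRIC_DEFINITIONS[term.lower()]
    if team in definitions:
        return definitions[team]
    if len(definitions) == 1:
        return next(iter(definitions.values()))
    # Surface the ambiguity instead of silently choosing one interpretation.
    options = ", ".join(sorted(definitions))
    raise AmbiguousTermError(f"'{term}' is defined differently by: {options}")


print(resolve_metric("revenue", team="finance"))
print(canonical_entity("Client"))  # customer
```

Raising an error (or, in a conversational setting, asking a clarifying question) is the design choice that matters here: an explicit "which revenue?" is far cheaper than a confident answer built on the wrong definition.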

In addition to the business context, maintaining usage history as context for future queries allows an agent to learn. For example, it can let an agent learn what a particular user actually intends when a question can be interpreted multiple ways and how individuals and teams prefer that answers be framed and delivered.

While some LLM context failures produce a response that signals uncertainty, ambiguity, or inaccuracy, the LLM will often respond with a clean, confident, well-formatted answer that is simply wrong. That is harder to catch, so it can drive real decisions, and even harmful actions, before anyone notices.

To make the problem even more challenging, context is a moving target. As metrics are redefined, schemas evolve, and tribal knowledge shifts with team and user changes, context must evolve too, which means you can’t set it and forget it. This adds to the complexity of an already complex enterprise data infrastructure.

LLMs can’t infer your enterprise context on their own. Context needs to be owned, versioned, and governed like any other critical business asset. This is one area where human-in-the-loop oversight isn’t optional: when an agentic system makes or supports a business decision based on the wrong definition of a key metric, someone needs to be accountable.

With the right context, an agentic analytic system can unlock the full potential of your data: answering questions in plain language, monitoring your data continuously, decomposing complex business questions into verifiable reasoning steps, and improving with every interaction. The sections below explore each of these capabilities in turn.

Key capabilities of agentic analytics

Analyzing structured, semi-structured and unstructured data

A big advantage of using an AI tool for data analysis is that users can ask questions in plain business language without needing to know SQL, schema names, or table structures. The agentic system can interpret ambiguous or underspecified questions using your enterprise context, as described above.

An agentic system can also explain its reasoning and outputs in plain language, making results interpretable and auditable by non-technical stakeholders.

In addition, an LLM-based system can act on free-text fields in semi-structured data. For example, it can perform sentiment analysis on comments, or extract and categorize the root causes mentioned in customer support tickets to identify which product issues may be driving subscription cancellations.
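The root-cause step can be sketched as follows. In a real system, an LLM call would read each ticket and return its causes; here a hypothetical keyword table (`ROOT_CAUSE_KEYWORDS`) stands in for that call so the example runs on its own, and the tickets are invented. Naive substring matching like this is far cruder than LLM extraction and is shown only to make the aggregation step concrete.

```python
from collections import Counter
from typing import Iterable, List, Tuple

# Hypothetical keyword rules, standing in for an LLM extraction call.
ROOT_CAUSE_KEYWORDS = {
    "billing": ("invoice", "charged", "refund"),
    "performance": ("slow", "timeout", "lag"),
    "missing_feature": ("no way to", "feature request"),
}


def categorize(ticket: str) -> List[str]:
    """Return every root cause whose keywords appear in the ticket text."""
    text = ticket.lower()
    causes = [cause for cause, words in ROOT_CAUSE_KEYWORDS.items()
              if any(word in text for word in words)]
    return causes or ["other"]


def cancellation_drivers(tickets: Iterable[Tuple[str, bool]]) -> Counter:
    """Count root causes among tickets from accounts that later cancelled."""
    counts: Counter = Counter()
    for text, cancelled in tickets:
        if cancelled:
            counts.update(categorize(text))
    return counts


tickets = [  # (ticket text, did the account cancel?)
    ("Dashboard is slow and times out every morning", True),
    ("I was charged twice on my last invoice", True),
    ("There is no way to schedule reports", False),
    ("Reports lag badly after the update", True),
]
print(cancellation_drivers(tickets).most_common())  # [('performance', 2), ('billing', 1)]
```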

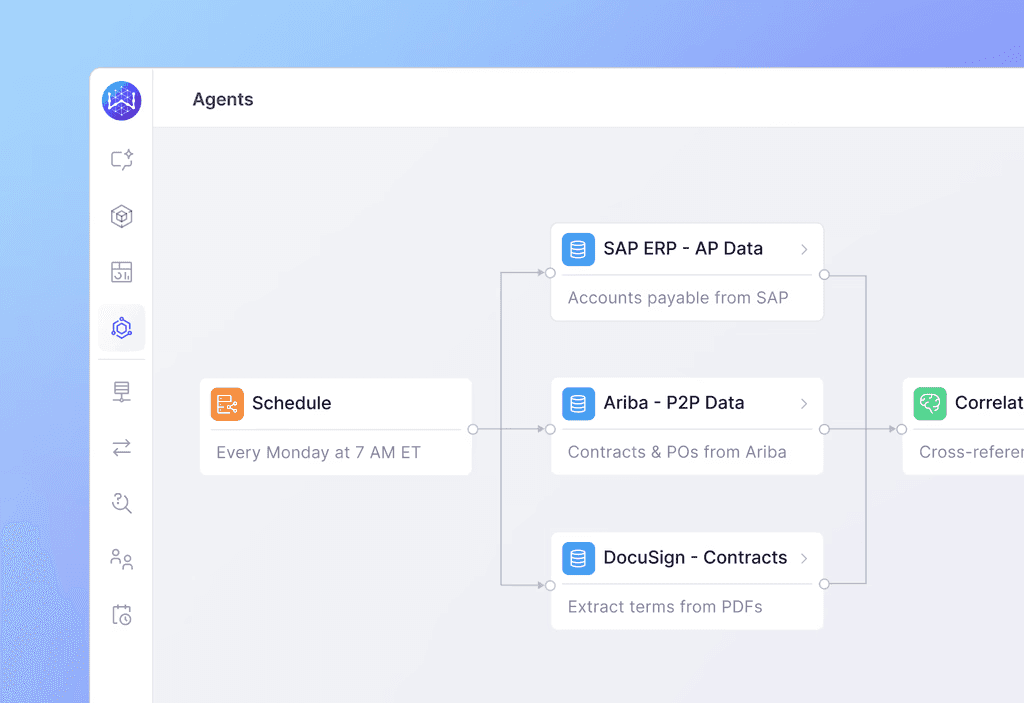

The system can also reason over entirely unstructured documents that sit outside of standard databases, such as supplier contracts, RFIs, and legal agreements. Instead of a manual audit of hundreds of PDF files, a procurement lead can ask the system to "Identify which of our active service contracts include a price-escalation clause tied to the Consumer Price Index," or "Summarize the specific sustainability commitments made by our top ten strategic vendors in their latest proposals." By synthesizing insights from these documents alongside structured spend data, the agent provides a holistic view of compliance and risk that was previously buried in disparate folders.

WisdomAI procurement agents integrate unstructured data sources with structured data

Autonomous data exploration and proactive insight generation

A big difference between agentic analytics and prior generations of BI tools is that you can give an agentic system a goal, and it can continuously monitor your data and surface insights proactively. For example, an agentic system can automatically detect that conversion rates have dropped, investigate the contributing factors across your marketing, product, and sales data, and surface a root cause analysis without anyone needing to ask.

Agents can also surface trends and anomalies that might not be obvious through traditional dashboard monitoring, such as detecting a gradual erosion in customer satisfaction scores across a specific product tier that might otherwise be masked by steady or improving scores across the rest of the portfolio. Another example is recognizing that a decline in sales rep productivity is correlated with a recent increase in customer relationship management (CRM) data entry requirements, something that would be hard for analysts to notice from looking at sales performance and operational metrics in separate dashboards.

Of course, agentic systems can also handle more straightforward monitoring of your key performance indicators (KPIs), alerting you when they cross thresholds or when anomalies are detected. This monitoring can be informed by your business context, so the system understands seasonal patterns, expected ranges, and business-specific definitions of “normal.” Unlike traditional alerting, when an alert is triggered, an agentic system can automatically begin investigating why, rather than simply notifying someone that a number “looks wrong.”
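A minimal sketch of context-aware monitoring, assuming that "normal" for a KPI is defined by the same weekday in previous weeks (a simple seasonal baseline) and that a z-score beyond 3 counts as anomalous. Both choices, the function names, and the conversion-rate figures are hypothetical; the `on_anomaly` callback marks where an agent would start its investigation instead of just sending an alert.

```python
import math
from statistics import mean, stdev
from typing import Callable, List, Tuple


def seasonal_z_score(same_weekday_history: List[float], today: float) -> float:
    """How many standard deviations today's value sits from past same-weekday values."""
    mu = mean(same_weekday_history)
    sigma = stdev(same_weekday_history)  # needs at least two history points
    if sigma == 0:
        return 0.0 if today == mu else math.copysign(math.inf, today - mu)
    return (today - mu) / sigma


def check_kpi(name: str, history: List[float], today: float,
              on_anomaly: Callable[[str, float, float], None],
              z_threshold: float = 3.0) -> bool:
    z = seasonal_z_score(history, today)
    if abs(z) > z_threshold:
        on_anomaly(name, today, z)  # hand off to an investigation, not just an alert
        return True
    return False


investigations: List[Tuple[str, float, float]] = []
past_mondays = [0.031, 0.030, 0.032, 0.029, 0.031, 0.030]  # conversion rate
flagged = check_kpi("conversion_rate", past_mondays, 0.022,
                    lambda name, value, z: investigations.append((name, value, z)))
print(flagged, round(investigations[0][2], 1))  # True -8.1
```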

Multi-step reasoning

Complex business questions are rarely resolved by a single query. Agentic systems can decompose a goal into sub-questions, explore relevant data, and synthesize findings without human direction at each step.

For example, consider this question: “Why did we miss our revenue target last quarter?” An agentic system might break that down into the following steps:

Retrieve total revenue by month for the past four quarters and confirm the shortfall.

Break down by product line, region, and sales rep to identify where the gap is concentrated.

Compare pipeline coverage in the affected segments against the same period in prior quarters.

Retrieve multiple indicators of deal velocity (e.g., average sales cycle length, stage progression rates, and close rates) in those segments to identify where deals are stalling.

Cross-reference with marketing data to check whether lead volume or quality changed in the same period.

Check whether any pricing or discount policy changes coincide with the timing of the decline.

Synthesize findings into a ranked list of contributing factors with supporting data for each.

Or consider the steps that might be required to answer this question: “Which customers are most at risk of cancellation in the next 90 days?”

Pull product usage data for all active customers and calculate engagement trends over the past 60 days.

Identify accounts with declining usage, unpaid invoices, or unresolved support tickets.

Cross-reference with CRM notes and recent communication history to flag accounts with no recent outreach.

Run sentiment analysis on recent support ticket text to surface accounts expressing frustration.

Score and rank accounts by combined risk signal across all four dimensions.

Generate a prioritized outreach list with a summary of the key risk factors for each account.
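The scoring and ranking steps might look like the sketch below. The `AccountSignals` fields map to the four dimensions above, while the normalization caps (5 open issues, 90 days) and the weights are hypothetical choices; a real system would calibrate them against historical cancellations. The account data is invented.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class AccountSignals:
    account: str
    usage_trend: float              # change in usage over the window, e.g. -0.5 = 50% drop
    open_issues: int                # unpaid invoices plus unresolved support tickets
    days_since_outreach: int
    frustrated_ticket_share: float  # share of recent tickets flagged as frustrated, 0 to 1


# Hypothetical weights per risk dimension (they sum to 1.0).
WEIGHTS = {"usage decline": 0.4, "open issues": 0.2,
           "no recent outreach": 0.2, "frustrated tickets": 0.2}


def risk_components(s: AccountSignals) -> Dict[str, float]:
    """Normalize each signal to the range 0 to 1 (higher means riskier)."""
    return {
        "usage decline": min(max(-s.usage_trend, 0.0), 1.0),  # growth adds no risk
        "open issues": min(s.open_issues / 5, 1.0),
        "no recent outreach": min(s.days_since_outreach / 90, 1.0),
        "frustrated tickets": min(max(s.frustrated_ticket_share, 0.0), 1.0),
    }


def risk_score(s: AccountSignals) -> float:
    return sum(WEIGHTS[k] * v for k, v in risk_components(s).items())


def outreach_list(accounts: List[AccountSignals]) -> List[str]:
    """Rank accounts by risk and name the two largest contributing factors."""
    lines = []
    for s in sorted(accounts, key=risk_score, reverse=True):
        comps = risk_components(s)
        top = sorted(comps, key=lambda k: WEIGHTS[k] * comps[k], reverse=True)[:2]
        lines.append(f"{s.account}: risk {risk_score(s):.2f} ({', '.join(top)})")
    return lines


accounts = [
    AccountSignals("Acme", -0.5, 3, 120, 0.6),
    AccountSignals("Globex", 0.1, 0, 10, 0.0),
    AccountSignals("Initech", -0.2, 1, 45, 0.25),
]
for line in outreach_list(accounts):
    print(line)
```

Keeping the per-dimension components separate from the final score is what makes the "summary of key risk factors" step possible: the same numbers that rank an account also explain why it ranks there.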

In practice, each of these steps may itself decompose into several lower-level actions (querying tables, joining datasets, performing calculations on results, etc.). All of these should be executed and logged by the agent both for user trust and to support governance and audit requirements.

The quality of this reasoning depends heavily on the context layer, which tells the agent which sources are relevant, how to combine them, and which business rules apply at each step. WisdomAI is an agentic analytics platform built specifically around this problem. The Wisdom Adaptive Context Engine (ACE) supplies the right schemas, metrics, relationships, and business rules at each reasoning step, improving the accuracy of agentic analytics.

Continuous learning

Agentic systems can improve with use. Every query, correction, follow-up question, and piece of direct feedback represents a signal that can improve the system’s understanding of your enterprise data. WisdomAI leverages this feedback to continuously refine the context it operates on — improving metric definitions, resolving ambiguities, and learning user preferences over time — so that the system becomes more accurate and effective the more it is used.

As enterprise context evolves, there is a risk that an update improves the agentic system's handling of one type of question while inadvertently degrading others. WisdomAI guards against this with a built-in quality assurance mechanism that benchmarks system performance against a curated set of validated queries (developed collaboratively with customers during onboarding) to catch any such regressions before they reach production.
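The regression check can be sketched as follows, assuming each validated query has a single numeric answer. The golden questions, expected values, and dictionary-backed "system versions" are hypothetical, and the rule shown (reject an update if any previously correct answer becomes wrong, even if other answers improve) is one reasonable policy, not necessarily the exact one WisdomAI applies.

```python
import math
from typing import Callable, Dict, List, Tuple

AnswerFn = Callable[[str], float]

# Hypothetical validated queries, paired with their approved answers.
GOLDEN_SET: List[Tuple[str, float]] = [
    ("Northeast revenue, last quarter", 1120.0),
    ("Active customers, September", 842.0),
    ("Average deal size, Q3", 15300.0),
]


def from_answers(answers: Dict[str, float]) -> AnswerFn:
    """Simulate a system version; unanswered questions return NaN (never correct)."""
    return lambda question: answers.get(question, math.nan)


def is_correct(answer: AnswerFn, question: str, expected: float) -> bool:
    return math.isclose(answer(question), expected, rel_tol=1e-9)


def regressions(current: AnswerFn, candidate: AnswerFn,
                golden: List[Tuple[str, float]]) -> List[str]:
    """Questions the current system answers correctly but the candidate gets wrong."""
    return [q for q, expected in golden
            if is_correct(current, q, expected) and not is_correct(candidate, q, expected)]


def approve(current: AnswerFn, candidate: AnswerFn,
            golden: List[Tuple[str, float]]) -> bool:
    return not regressions(current, candidate, golden)


current = from_answers({"Northeast revenue, last quarter": 1120.0,
                        "Active customers, September": 800.0,  # currently wrong
                        "Average deal size, Q3": 15300.0})
fixes_one_breaks_one = from_answers({"Northeast revenue, last quarter": 1100.0,
                                     "Active customers, September": 842.0,
                                     "Average deal size, Q3": 15300.0})
clean_fix = from_answers({"Northeast revenue, last quarter": 1120.0,
                          "Active customers, September": 842.0,
                          "Average deal size, Q3": 15300.0})

print(approve(current, fixes_one_breaks_one, GOLDEN_SET))  # False
print(approve(current, clean_fix, GOLDEN_SET))             # True
```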

The architecture behind agentic analytics

Now that we’ve covered what agentic analytics can do, let’s take a look at how it’s built. An agentic analytics system comprises the following building blocks:

Enterprise data layer

Enterprise context layer

Agentic tools and orchestration

LLM engine

Insight delivery

Feedback loop

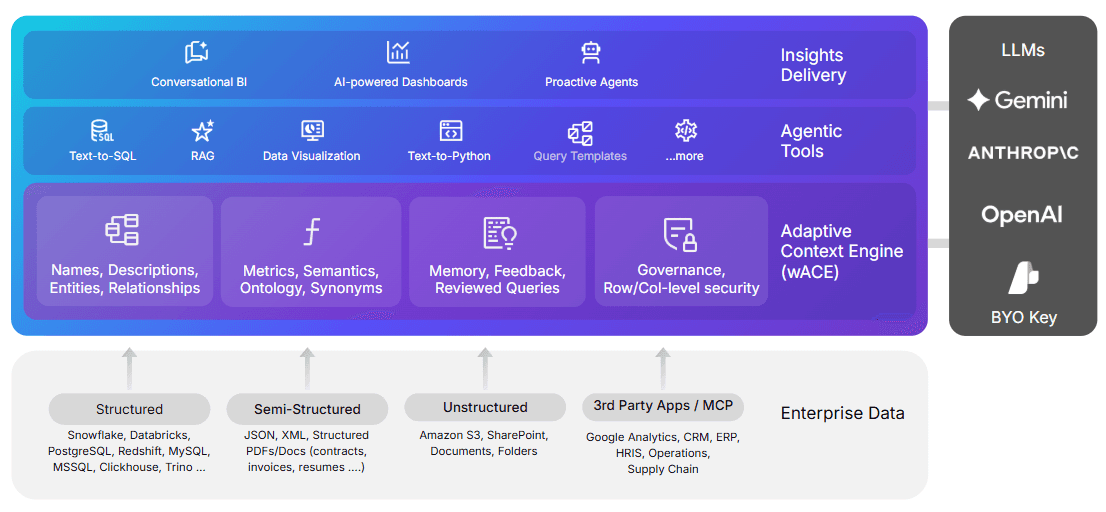

The image below shows how these building blocks all come together in WisdomAI’s Agentic Analytics tool. It illustrates the different types of enterprise data, the context that the adaptive context engine learns and maintains, some of the agentic tools offered, and the three types of insight delivery supported. WisdomAI uses a bring-your-own-key approach to plugging in an LLM engine, giving the enterprise control over access to its data. Note that the user interactions and the resulting feedback loop are not illustrated here.

WisdomAI’s agentic analytics architecture

In the sections below, we’ll describe each of these layers in more detail.

Enterprise data layer

The enterprise data layer is the set of resources the system can query, analyze, and reason over. An enterprise’s primary data typically lives in a data warehouse or lakehouse (Snowflake, BigQuery, Databricks, or a similar platform), but other sources, such as CRM systems, marketing platforms, support tools, finance systems, product analytics, and unstructured data sources, also hold data that an analyst, human or agentic, needs to get the complete picture.

WisdomAI treats the structured warehouse or lakehouse as the primary source of data, but agents can also access additional operational systems as first-class data sources via Model Context Protocol (MCP) servers. This arrangement allows the agentic system to combine warehouse data with live data from systems like Salesforce, Google Analytics, or Jira without requiring ETL pipelines to copy that data into the warehouse first.

Enterprise context layer

The context layer encodes organizational knowledge that the system needs to interpret questions and data correctly. This includes business definitions, metric calculations, data relationships, join logic, access policies, and institutional knowledge that typically lives only in analysts’ heads.

With WisdomAI’s Adaptive Context Engine (ACE), you can move beyond structural definitions like tables, joins, and metrics to capture organizational meaning — business policies, tribal knowledge, user preferences, and decision history.

ACE is bootstrapped from existing enterprise assets during onboarding by ingesting dbt (data build tool) projects, BI models, data catalogs, historical query logs, and documentation, making it useful from day one without requiring customers to manually specify all this knowledge from scratch. It is then continuously refined through usage, with all changes validated before they take effect. This process results in 95% accuracy in complex enterprise data environments.

Agentic tools and orchestration

The tools layer provides the specific capabilities an agent can invoke, such as translating natural language to SQL queries, calling external APIs, and generating visualizations. The orchestration layer manages how these tools are sequenced, how intermediate results are passed between steps, and how state and context are preserved across a multi-turn analytical workflow.

Although today's leading LLMs are quite good at generating SQL and Python code, generating the right code for data analytics requires more than a capable model. WisdomAI applies explicit algorithmic control to how context influences code generation. Some of the key techniques the system uses include surfacing only what is relevant to the current query, decomposing complex requests into verifiable steps before any code is written, and adjusting plans based on execution feedback.
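As a rough illustration of "surfacing only what is relevant," the sketch below ranks tables by word overlap between the question and each table's name and description, then keeps only the top few for the model's prompt. This naive keyword retriever and the `CATALOG` entries are hypothetical; the text above does not describe how WisdomAI selects relevant context, and a production system would likely draw on richer signals than shared words.

```python
import re
from typing import Dict, List, Set

# Hypothetical catalog: table name -> short description from the context layer.
CATALOG: Dict[str, str] = {
    "fct_orders": "orders revenue amount region order date customer",
    "dim_customers": "customer account segment region signup",
    "fct_support_tickets": "support tickets status priority customer text",
    "dim_employees": "employee department manager hire date",
}


def words(text: str) -> Set[str]:
    """Lowercase alphabetic tokens; underscores in table names act as separators."""
    return set(re.findall(r"[a-z]+", text.lower()))


def relevant_tables(question: str, catalog: Dict[str, str], k: int = 2) -> List[str]:
    """Return up to k tables whose name or description shares words with the question."""
    q = words(question)
    scored = []
    for name, description in catalog.items():
        overlap = len(q & words(name + " " + description))
        if overlap > 0:
            scored.append((overlap, name))
    scored.sort(key=lambda item: (-item[0], item[1]))  # most overlap first, then by name
    return [name for _, name in scored[:k]]


question = "Which region had the most revenue from orders last quarter?"
print(relevant_tables(question, CATALOG))  # ['fct_orders', 'dim_customers']
```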

LLM engine

The LLM engine is the reasoning core of an agentic analytics system, powering the Reason → Act → Observe → Iterate loop that makes autonomous multi-step analysis possible. The LLM interprets intent, formulates a plan, generates the code or tool calls needed to execute it, and reflects on the results. Modern LLMs are remarkably capable general-purpose reasoners, but in enterprise analytics environments, their outputs are only as reliable as the context and constraints they operate within. An unconstrained LLM can “hallucinate,” producing confident, well-formatted answers that may be based on the wrong metric definition, join, or entire data source.

WisdomAI is designed to work with LLMs from providers such as Anthropic, Google, and OpenAI, and it allows organizations to bring their own API keys, treating the LLM engine as a configurable component rather than a fixed dependency. This way, enterprises retain control over which model processes their data and under what terms, an important consideration for organizations with strict data residency or privacy requirements.

The key architectural decision that WisdomAI enforces is that the LLM always operates under the guidance of ACE, which supplies the relevant schemas, metrics, relationships, and business rules at each step of the reasoning process. This means the quality of outputs depends not only on which LLM is used but also on the quality of the context that constrains it, creating a more stable and governable foundation for accuracy and trust.

Insight delivery

Agentic systems can deliver their outputs in several ways, depending on the needs and preferences of the users. That could be a conversational BI or chat interface with answers provided via automatically generated tables, graphs, and visualizations that are explained in plain language. Output could also come in the form of AI-powered dashboards that update dynamically. For fully autonomous workflows, this can look like agents that deliver insights proactively without needing to be asked.

WisdomAI supports all three of these delivery modes. Outputs at every layer are explainable: agents document their reasoning, the data sources they used, and the assumptions they applied so that business users and governance teams can audit all of the agent’s conclusions. When appropriate, agents can go beyond delivering insights, triggering downstream actions in connected systems and closing the loop between analysis and execution.

Feedback loop

An agentic analytics system should not be static. Every interaction creates a signal that can be used to improve the system’s understanding of your data and your business. The feedback loop allows these insights to be captured, interpreted, and used to refine the context layer over time.

WisdomAI's feedback loop captures feedback signals from multiple sources: explicit user corrections and clarifications made during conversations, query evolution patterns such as edits and retries, and session-level friction signals like unresolved follow-up questions.

All of this feeds back into ACE, but every proposed change is benchmarked against a curated set of validated queries before it can be approved, ensuring that even well-intentioned human-approved updates don't inadvertently break something that was already working. All updates are attributed and versioned, so there is a clear audit trail of how and why the system's understanding evolved. This ensures that the system gets smarter over time without the risk of uncontrolled drift undermining the accuracy and trust you’ve built.
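A minimal sketch of attributed, versioned context updates behind an approval gate. The `ContextStore` class, the `VALIDATED` definitions, and the toy `benchmark_gate` (which only checks that already-validated definitions are left untouched, standing in for re-running a full set of validated queries) are all hypothetical, not WisdomAI's implementation.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Callable, Dict, List

Definitions = Dict[str, str]


@dataclass(frozen=True)
class ContextVersion:
    version: int
    definitions: Definitions
    author: str
    reason: str
    created_at: datetime


@dataclass
class ContextStore:
    history: List[ContextVersion] = field(default_factory=list)

    def current(self) -> Definitions:
        return self.history[-1].definitions if self.history else {}

    def propose(self, changes: Definitions, author: str, reason: str,
                gate: Callable[[Definitions, Definitions], bool]) -> bool:
        old = self.current()
        new = {**old, **changes}
        if not gate(old, new):
            return False  # rejected: nothing changes, no new version is recorded
        self.history.append(ContextVersion(len(self.history) + 1, new, author,
                                           reason, datetime.now(timezone.utc)))
        return True


# Hypothetical definitions that the validated query set depends on.
VALIDATED: Definitions = {"revenue": "SUM(recognized_invoice_amount)"}


def benchmark_gate(old: Definitions, new: Definitions) -> bool:
    """Toy gate: a validated definition already in place may not be changed."""
    return all(new.get(term) == d for term, d in VALIDATED.items() if old.get(term) == d)


store = ContextStore()
store.propose({"revenue": "SUM(recognized_invoice_amount)"}, "alice",
              "bootstrapped from dbt project", benchmark_gate)
store.propose({"active_customer": "logged in within 30 days"}, "carol",
              "user clarification in chat", benchmark_gate)
rejected = not store.propose({"revenue": "SUM(contract_value)"}, "bob",
                             "sales prefers bookings", benchmark_gate)
for v in store.history:  # audit trail: who changed what, and why
    print(v.version, v.author, v.reason)
```

Because each version is immutable and carries its author and reason, rolling back or explaining a past answer comes down to looking up the version that was current at the time.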

Context is the foundation of reliable agentic analytics

The future of data analytics is agentic analytics. Agentic systems reason, investigate, and act autonomously on your behalf, so you can stop waiting for analysts to surface the right information at the right time, missing the signals that don't show up in predefined dashboards, and losing institutional knowledge every time a team member leaves.

However, your data is fragmented, your schemas are incomplete, and your business logic lives in places no LLM can see on its own. To get the most out of your data, you need to invest in how your business context is learned, validated, and maintained over time. If you're evaluating platforms for your organization, the first question to ask any vendor is: “How does your system learn and maintain enterprise context over time?”

To hear how WisdomAI answers this question, schedule a demo today.

Summary of key agentic analytics concepts

| Concept | Description |
| --- | --- |
| Agentic analytics | This term refers to AI agents that can reason over your data, monitor the business continuously, and surface insights without being asked. |
| AI agents | AI agents are autonomous AI systems that are able to take actions to achieve complex goals. |
| Context is what makes agentic analytics hard | Subtleties and ambiguities in your data and business definitions mean that most agentic analytics approaches fail, not because of weak LLMs but because of insufficient enterprise context. |
| Natural language analytics across structured and unstructured data | LLM-based agentic analytics systems allow users to ask questions in plain business language and receive answers with clear explanations. They can also analyze unstructured text fields like comments and tickets, allowing you to derive business insights from free-form data. |
| Autonomous exploration and proactive insight generation | Agents can monitor data continuously and proactively surface insights that may not be obvious from traditional dashboard monitoring, and when an alert is triggered, an agentic system can automatically begin investigating why. |
| Multi-step reasoning | Complex business questions require agents to reason across multiple ordered steps, drawing on a context layer that knows which data sources to use, how to combine them, and which business rules apply. |
| Continuous learning | Agentic systems can improve with user feedback. Your queries and corrections provide information that can be used to improve the system’s understanding of your data and your needs. |
| The architecture behind agentic analytics | An agentic analytics system comprises the following building blocks: enterprise data layer, enterprise context layer, agentic tools and orchestration, LLM engine, insight delivery, and feedback loop. |